By Alex Campanella and Marcus Colby

We often see that when elite sports and military organizations are trying to optimize human performance, they gravitate toward complex analytics, advanced technology, and experimental sports science techniques. In doing so, many are trying to run before they can walk, because they haven’t mastered the fundamentals of data collection, management, and presentation. We’ve come up with some steps to help groups establish more standardized and consistent processes, make better use of the data they already have, and deliver small wins now.

The Case for Fundamentals First

In a sports performance department, there are typically two main goals. The first is athlete availability: what can we do on the training front to ensure we’re developing robust athletes who are consistently available to perform at a high level? The second is performance: what’s the best combination of training, tactics, and strategy that will allow us to excel and win big games?

Both areas involve complex problems and challenges. To try to solve them, some organizations turn to the data techniques used in finance and business, but these require large volumes of data to be reliable and accurate, and for the most part, sports science isn’t there yet because its data sets are too small. It’s better to put simple, fundamental data processes in place that can deliver basic but meaningful insights, and then be patient enough to play the long game rather than hoping for a golden ticket that gives you everything you want right now.

We frequently have conversations with teams that want to improve injury surveillance using a big data system or some advanced modeling method. Our immediate question is, “Can you show me right now what kinds of injuries are occurring, when, and how?” Nine times out of ten they can’t, because they’re skipping the fundamental best practices for capturing injury details. So before they move on to more advanced sports science, they should go back to basics and start checking the boxes for collecting fundamental injury data. For example, a team doctor or physio should enter the date and type of injury and how it happened as close as possible to the moment it occurs, along with mechanism notes that give greater context.
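To make the “what, when, and how” question concrete, here is a minimal Python sketch of a structured injury record and a summary built from it. The `InjuryRecord` fields and the `injury_summary` helper are hypothetical illustrations, not Smartabase’s data model or API; the point is only that dedicated date, type, and mechanism fields make the basic questions easy to answer.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class InjuryRecord:
    """One injury entry, captured as close as possible to when it occurs.

    Field names are hypothetical and only illustrate the idea.
    """
    athlete: str
    injury_date: date
    injury_type: str   # e.g. "hamstring strain"
    mechanism: str     # e.g. "high-speed running"
    notes: str = ""    # free-text context from the doctor or physio


def injury_summary(records):
    """Count injuries by type (what), by month (when), and by mechanism (how)."""
    by_type = Counter(r.injury_type for r in records)
    by_month = Counter(r.injury_date.strftime("%Y-%m") for r in records)
    by_mechanism = Counter(r.mechanism for r in records)
    return by_type, by_month, by_mechanism


records = [
    InjuryRecord("A", date(2024, 3, 2), "hamstring strain", "high-speed running"),
    InjuryRecord("B", date(2024, 3, 9), "ankle sprain", "landing from jump"),
    InjuryRecord("C", date(2024, 4, 1), "hamstring strain", "high-speed running",
                 "late in second half"),
]
by_type, by_month, by_mechanism = injury_summary(records)
print(by_type.most_common(1))  # [('hamstring strain', 2)]
```

If the same information lived only in free-text notes, producing these counts would mean someone reading and re-coding every entry by hand.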

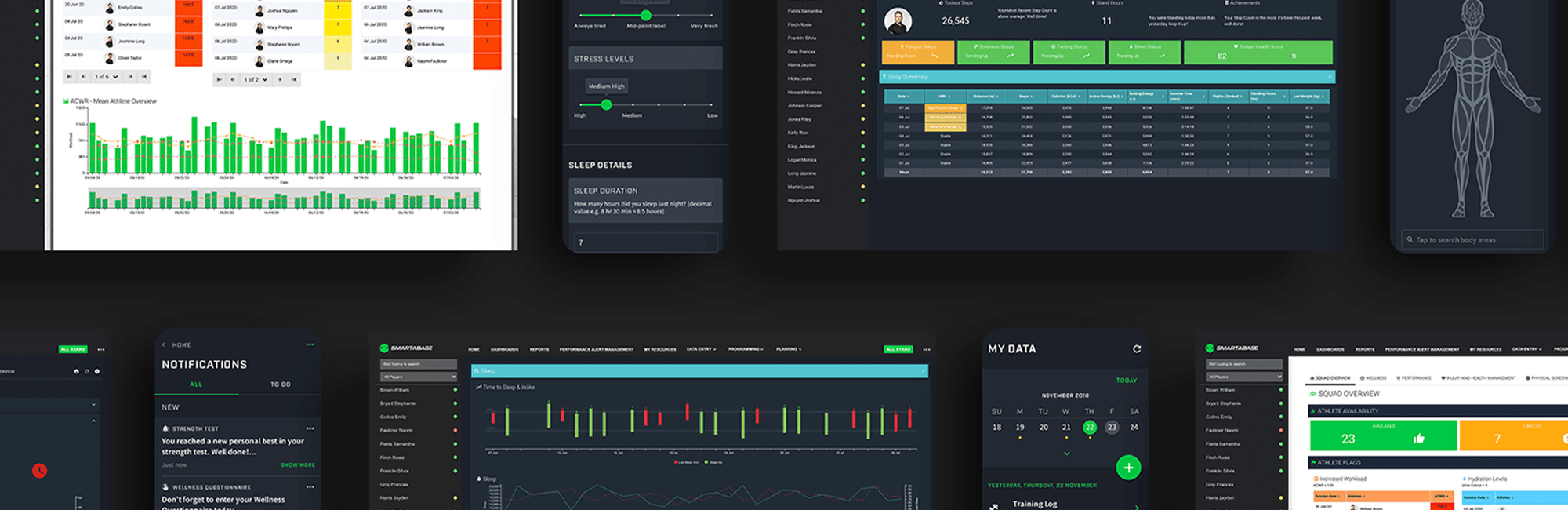

Even with a powerful human performance optimization (HPO) platform like Smartabase, you cannot hope to create a useful injury risk or readiness profile, or increase athlete availability without very clean observational data that lets you know exactly what’s going on. Once you have this, then you can identify possible risk factors, flag them, and make changes to athlete preparation and recovery. But you have to start at the beginning by collecting clean, high-quality information and managing it in a logical way.

Standardizing Data Collection and Management

Too often, data collection is performed with a scattershot approach, whether it concerns scheduling the data collection, the inputs used, or the types of information being gathered. For analytics to be impactful, there needs to be more standardization around which data is collected, when, and how.

It’s easy to implement some very basic collection methods with force plates and GPS systems and then use them to calculate simple metrics that are highly usable. For example, countermovement jump height can be established in the pre-season and used to monitor power output and neuromuscular readiness weekly from then on, while GPS data from daily training (like total distance covered and number of high-speed runs) can be compared against player, position group, and team averages from game day.
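Both metrics described above reduce to simple arithmetic. This sketch assumes the pre-season baseline is the mean of several countermovement jump trials (some staff use the best trial instead) and that a training GPS value is expressed as a ratio to a set of game-day values; the function names and numbers are hypothetical.

```python
from statistics import mean


def percent_of_baseline(value, baseline):
    """Weekly test result as a percentage of the pre-season baseline."""
    return 100.0 * value / baseline


def ratio_to_game_day(training_value, game_day_values):
    """Training GPS metric relative to the average of a set of game-day values."""
    return training_value / mean(game_day_values)


# Hypothetical values for one player.
preseason_cmj_cm = [40.0, 42.0, 41.0]          # repeated pre-season CMJ trials
baseline_cm = mean(preseason_cmj_cm)           # 41.0 cm
print(round(percent_of_baseline(38.95, baseline_cm), 1))        # 95.0

game_day_distance_m = [10200, 9800, 10000]     # player's recent game-day totals
print(round(ratio_to_game_day(6500, game_day_distance_m), 2))   # 0.65
```

Passing the player’s own game-day values, the position group’s, or the team’s to `ratio_to_game_day` gives the three comparisons mentioned in the text.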

Another element of data standardization is understanding the schedule: knowing which kinds of information will be collected at certain times of the year and deciding how this data will be used during player touch points. For example, your team might establish baselines for force-velocity profiles, maximum sprinting speed, and vertical jump height through frequent pre-season testing, and then switch to testing these metrics weekly once the in-season phase begins, using them as a monitoring tool. Player availability, by contrast, might be collected daily through all phases of the season so there are no gaps in availability data when analyzing seasonal trends.
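One way to make that schedule explicit is to write it down as configuration that staff and systems can share. The sketch below assumes Monday and Thursday as pre-season test days and Tuesday as the in-season test day; the metric names, day choices, and the `is_due` and `due_today` helpers are all hypothetical.

```python
from datetime import date

# Hypothetical test days (Monday=0 ... Sunday=6); adjust to your training week.
TEST_DAYS = {
    "daily": set(range(7)),
    "twice_weekly": {0, 3},   # Monday and Thursday
    "weekly": {1},            # Tuesday
}

# Hypothetical calendar: metric -> collection frequency in each phase.
SCHEDULE = {
    "pre-season": {
        "force_velocity_profile": "twice_weekly",
        "max_sprint_speed": "twice_weekly",
        "vertical_jump": "twice_weekly",
        "availability": "daily",
    },
    "in-season": {
        "force_velocity_profile": "weekly",
        "max_sprint_speed": "weekly",
        "vertical_jump": "weekly",
        "availability": "daily",
    },
}


def is_due(phase, metric, day):
    """True if `metric` should be collected on `day` during `phase`."""
    return day.weekday() in TEST_DAYS[SCHEDULE[phase][metric]]


def due_today(phase, day):
    """Sorted list of metrics to collect on `day`."""
    return sorted(m for m in SCHEDULE[phase] if is_due(phase, m, day))


print(due_today("in-season", date(2024, 1, 1)))  # ['availability'] (a Monday)
```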

Once you have clarity around the big picture, it’s important to be intentional about the timing of when data will be gathered. Consider what times of day would be best to perform testing, screening, or monitoring and when these occur during a training week, as both can impact player performance and skew the numbers. Also take into account contextual factors that are happening around data collection, like whether players have just come off back-to-back games in the schedule, if they did their jump testing before or after the warmup or training session, and how soon you want to assess them after they return from an away game. These details can inform standardized, repeatable data collection practices.
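Contextual factors are easiest to respect when they are recorded alongside each result. In this sketch (field names and categories are hypothetical), a jump test is compared only with earlier tests from the same athlete taken at the same time of day, at the same point relative to the warmup or session, and the same number of days after a game; tests that followed back-to-back games are set aside.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JumpTest:
    athlete: str
    cmj_height_cm: float
    time_of_day: str          # e.g. "AM" or "PM"
    timing: str               # e.g. "pre_warmup", "post_warmup", "post_session"
    days_since_game: int
    after_back_to_back: bool  # was the test taken after back-to-back games?


def protocol_key(test):
    """The conditions that must match for two tests to be comparable."""
    return (test.time_of_day, test.timing, test.days_since_game)


def comparable_history(history, current):
    """Past tests for the same athlete collected under the same protocol."""
    return [
        t for t in history
        if t.athlete == current.athlete
        and protocol_key(t) == protocol_key(current)
        and not t.after_back_to_back
    ]


history = [
    JumpTest("A", 42.0, "AM", "pre_warmup", 2, False),
    JumpTest("A", 41.5, "AM", "pre_warmup", 2, False),
    JumpTest("A", 44.0, "PM", "post_warmup", 2, False),  # different protocol
    JumpTest("A", 39.0, "AM", "pre_warmup", 2, True),    # after back-to-back games
]
current = JumpTest("A", 40.5, "AM", "pre_warmup", 2, False)
print([t.cmj_height_cm for t in comparable_history(history, current)])  # [42.0, 41.5]
```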

The way you store data is also crucial. This can encompass the terminology your team uses, choosing one metric over another, and deciding which level of detail is best, even down to the number of decimal places you use for certain data points. It’s also worth noting that with firmware updates, vendors regularly change how they’re calculating variables. For example, some sleep tracker numbers like time in bed might stay the same, but the algorithm for sleep quality could be updated. You need to factor such changes into your data input and management approach so it doesn’t skew the numbers too much or create apples-to-oranges comparisons. This is also relevant when upgrading hardware and replacing one system with another. Reviewing the literature for standardized differences between technologies can help you make the required adjustments.
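A simple safeguard is to store each value under one agreed metric name, rounded to an agreed precision, together with the device and firmware version that produced it. The sketch below does that and treats two values as directly comparable only when metric, device, and firmware all match. The metric names, decimal-place rules, and device labels are hypothetical, and any correction between versions would have to come from vendor documentation or published comparisons, which is not modeled here.

```python
from dataclasses import dataclass

# Hypothetical house rules: one agreed name and precision per metric.
DECIMALS = {"time_in_bed_h": 2, "sleep_quality": 1}


@dataclass(frozen=True)
class Measurement:
    athlete: str
    metric: str      # agreed metric name, e.g. "sleep_quality"
    value: float
    device: str      # hypothetical device label
    firmware: str    # firmware version reported at collection time


def store(athlete, metric, value, device, firmware):
    """Round to the agreed precision and keep provenance with the value."""
    if metric not in DECIMALS:
        raise KeyError(f"unknown metric name: {metric!r}")
    return Measurement(athlete, metric, round(value, DECIMALS[metric]), device, firmware)


def directly_comparable(a, b):
    """Comparable only if metric, device, and firmware all match."""
    return (a.metric, a.device, a.firmware) == (b.metric, b.device, b.firmware)


before = store("A", "sleep_quality", 82.34, "tracker-x", "2.1")
after = store("A", "sleep_quality", 79.96, "tracker-x", "3.0")
print(before.value, after.value)           # 82.3 80.0
print(directly_comparable(before, after))  # False
```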

Increasing Data Usefulness with Consistency

Consistency is another crucial consideration in good data collection and management. You can put solid, standardized processes in place, but if you don’t stick with them, you’ll limit their usefulness because you won’t have regular data coming into your athlete management system (AMS) for analysis. If, by contrast, inputs feed into the system continually, you can go beyond snapshot numbers and start doing longitudinal calculations, such as standardized Z-scores and acute-to-chronic workload ratios. These help show progression or regression over time, so you can put athletes’ performance into context and coaches and other stakeholders can take action when changes are significant.
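Both calculations mentioned above are short once data arrives consistently. This sketch computes a Z-score against an athlete’s own history (using the sample standard deviation, so at least two past values are needed) and a rolling-average acute-to-chronic workload ratio with 7-day and 28-day windows. The window lengths and the “coupled” form, in which the chronic window includes the acute week, are common choices rather than the only ones; exponentially weighted variants also exist.

```python
from statistics import mean, stdev


def z_score(value, history):
    """Standardize a new value against the athlete's own history."""
    return (value - mean(history)) / stdev(history)


def acute_chronic_ratio(daily_loads, acute_days=7, chronic_days=28):
    """Rolling-average acute:chronic workload ratio for the most recent day.

    `daily_loads` is ordered oldest to newest. The chronic window includes
    the acute window (the "coupled" form).
    """
    if len(daily_loads) < chronic_days:
        raise ValueError("need at least chronic_days days of load data")
    acute = mean(daily_loads[-acute_days:])
    chronic = mean(daily_loads[-chronic_days:])
    return acute / chronic


# Hypothetical daily loads (e.g. session RPE x minutes) for one athlete:
# three steady weeks followed by a heavier week.
loads = [400] * 21 + [600] * 7
print(round(acute_chronic_ratio(loads), 2))     # 1.33
print(round(z_score(60, [50, 52, 48, 50]), 2))  # 6.12
```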

One of the errors teams commonly make with data collection is changing things too often. If you change your wellness questionnaire every year, how can you expect the data to be usable and comparable across multiple seasons? It’s better to take a bit longer deciding which areas you want to ask your athletes about when you first put the form together, and then stick with them. The same goes for objective data sets captured from GPS and other systems. When you consistently collect athletes’ objective and subjective information, you multiply the amount of data available for analysis, expand the range of techniques you can use, and increase the usefulness of the data you already have.

Delivering Wins Through Simplified Information Sharing

Once you’re gathering high-quality data in a standardized and consistent manner, it’s vital to present it in a way that’s clear, concise, and actionable. Sometimes, as practitioners who are passionate about sports science, we tend to want to do more. We might truly think that if only we could solve these difficult problems, we’d have a greater impact on the program. But in reality, complexity can simply lead to information overload for the coach and players and become more of a distraction than an enabler. We can’t lose sight of the fact that the whole organization exists to help the head coach do their job: win. So before rushing off to collect a lot of data and use it to create flashy reports, it’s helpful to ask the coach what information would be most useful to them.

For example, they might simply want to know how many minutes each athlete played or trained. This could seem overly simplistic, but if a single metric is what’s needed to make better decisions now, then it is useful. Providing simple numbers, letting stakeholders know if these are above or below normal output for the individual, and suggesting what they might want to do about it is often all that’s needed.
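A report at this level of simplicity can be generated in a few lines. The sketch below labels each athlete’s minutes as above, below, or within their normal range, which it defines, as an assumption to agree with the coach, as the mean plus or minus one standard deviation of that athlete’s recent sessions; the function names are hypothetical.

```python
from statistics import mean, stdev


def minutes_status(recent_minutes, today_minutes, band=1.0):
    """Compare today's minutes with the athlete's own recent sessions."""
    avg = mean(recent_minutes)
    sd = stdev(recent_minutes)
    if today_minutes > avg + band * sd:
        return "above normal"
    if today_minutes < avg - band * sd:
        return "below normal"
    return "normal"


def coach_report(session):
    """session maps athlete -> (recent minutes list, today's minutes)."""
    return [
        f"{athlete}: {today} min ({minutes_status(recent, today)})"
        for athlete, (recent, today) in sorted(session.items())
    ]


recent = [80, 85, 90, 85, 80]   # hypothetical recent sessions
for line in coach_report({"B": (recent, 84), "A": (recent, 95)}):
    print(line)
# A: 95 min (above normal)
# B: 84 min (normal)
```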

Consider how you can set your head coach up for success. Some organizations make the mistake of trying to do too much too soon, but it’s far more effective to get comfortable with just a few data points that can inform coaches’ decisions than to spread the sports science team too thin and end up not doing anything well. If you can deliver small wins, the coach’s curiosity might start a domino effect, where they go from just wanting total minutes to wondering why something happens in a certain quarter of the game.

It’s not data itself that influences availability, performance, and other main goals, but how it’s used and followed up on. Establishing good, clear communication channels and sharing information across the organization will improve collaboration. If you do that, then everyone is on the same page and there’s a unified vision for the way you work with your athletes and the coaches who guide them. This should ultimately lead to higher availability and better performances. Rather than trying to create a data multiplying effect with the latest sports science techniques, first consider how you could better communicate takeaways from observational data that you already have.

Just as consistency is key to good data collection and management, it also applies to reporting and information sharing. Conveying insights and takeaways to coaches and practitioners on a regular basis makes it easier for them to integrate data-backed decision-making into practice planning, scheduling, and daily workflows, and to collaborate on improving athlete performance.

Improving Athlete Buy-In

Creating consistent and standardized data collection, management, and reporting practices will benefit your athletes too. When players know when they’re expected to submit information, what will be done with it, and how it will be given back to them, it’s more likely they will comply with the data collection aspect of the performance program and build it into their regular routines. Establishing solid data management practices also positively influences athletes’ perception of care. If testing and other kinds of data gathering are random or infrequent, it creates uncertainty, and if information isn’t given back regularly, it can undermine trust.

By contrast, when athletes know that they’ll be screened in the weight room twice a week, will submit a brief wellness survey each morning, and can expect a report at the end of each week, they will have more faith in the process and know that the people behind it have their best interests at heart. This is enhanced when the sports science staff can tell clear stories about how data points relate to game day performance, overall wellbeing, and, when an AMS also serves as the electronic medical record (EMR), medical treatment too.

We need curiosity and evolution in the sports science space, but this can’t supersede fundamental data management practices that assist coaches’ daily operations because that’s how you win now with what you already have. Project-based work is one way to continue expanding your skillset while becoming brilliant at the basics of data collection, management, and presentation. The better you get at communicating actionable insights from simple data, the more able you’ll be to explain takeaways from more complicated concepts in a way that your audience can understand and apply.