By Florencio Tenllado

When human performance (HP) organizations hire staff for their analytics teams, their ideal candidates would have a combination of technical expertise and deep domain knowledge. But in reality, most applicants are more competent on one side than the other. So why should a hiring manager consider prioritizing data skills, what order should data analysts, engineers, and other roles be hired in, and how can individuals who lack domain expertise fill in their gaps? Let’s try to answer these questions.

In elite sport environments – such as national sport institutes or professional sport teams – there are plenty of experts in the human performance domain, from sport scientists (like I was in a previous role), to strength and conditioning specialists, to the coaching staff. Although HP professionals work with data on a daily basis, they may not necessarily have the data and analytics-related skillset (computer science and IT, math and statistics, infrastructure, visualization, etc.) to unlock the full potential of the data available to them.

So, when an organization decides to extend its HP program to include analytics, it often makes sense to hire someone with a background in data and analytics. Bringing in someone who is more of a subject matter expert or a person from within the organization could work too. However, hiring someone with expertise in data and analytics and creating the right environment for them to collaborate with the abundance of experts in the human performance domain across the organization would likely deliver the best results.

Getting Up to Speed with Collaboration and Immersion

Whether your data and analytics team follows a centralized or a hybrid model, I recommend that new hires meet frequently with HP professionals (sport scientists, coaches, etc.) as well as with members of their own group. By spending time with other colleagues on the data and analytics team, they learn about the processes the team uses to tackle new data projects and the infrastructure (tools and technologies) it relies on to work with the available data.

By spending time with HP professionals, they get to know more about the organization and about what each role on the HP side does. It’s also a great opportunity to learn the language particular to the sport, understand some of the data points that are collected, and see how these connect to the goals the coaching staff are aiming for. This can be achieved by attending practices, going to games, asking coaches questions, and reading sport-specific books or research papers.

When I was a sport scientist, I worked with athletes from very disparate sports such as tennis, field hockey, and rifle shooting, among others. Although I am a trained professional in the human performance domain, I was not necessarily equipped with the sport-specific knowledge when I first got involved with each sport. It was only after spending time with coaches and HP professionals, and learning about the sport or discipline, its demands, and the physical and mental traits that characterized successful athletes that I was able to contribute towards enhancing their performance. This kind of collaboration is key to bridging the knowledge domain gap and enabling data and analytics professionals to solve human performance-specific challenges.

Whether someone is a domain expert or a data and analytics specialist, adaptability is key if you are working in an environment where you must cater for athletes across multiple sports, such as sports institutes, Olympic committees, or universities/colleges. In these instances, using a standardized methodology could assist you with tackling seemingly disparate and complex problems.

Applying the CRISP-DM Process Model

One such methodology, which can be applied to any data project and which I have found most useful, is CRISP-DM (Cross-Industry Standard Process for Data Mining). It is a six-stage framework that is as useful in human performance as it is in the business world it came from. According to a Data Science Process Alliance article, these stages are:

- Business understanding – What does the business need?

- Data understanding – What data do we have/need? Is it clean?

- Data preparation – How do we organize the data for modeling?

- Modeling – What modeling techniques should we apply?

- Evaluation – Which model best meets the business objectives?

- Deployment – How do stakeholders access the results?

The first phase, business (or problem) understanding, is the most crucial when attempting to bridge the gap between data and analytics and domain expertise. Any good project starts with a deep understanding of the customer’s needs and what exactly they want to accomplish by the end of it. It is in this phase that the data and analytics professional sits down with subject matter experts and begins to translate an HP-specific problem into a data problem. In my experience, when I have a solid understanding of the problem, I find it easier to move through the rest of the process and come out at the end with a valuable outcome for the stakeholders. Rushing through this phase often means spending time answering the wrong questions and having to go back to the whiteboard.

The domain knowledge gap can be bridged more quickly and effectively by bringing domain experts with you along each step of the CRISP-DM process. For example, queries may arise when you are identifying, collecting, and analyzing relevant data sets (Phase 2: Data Understanding). Something may not make sense with the data and upon discussing it with the domain expert, you uncover some caveats that explain what you initially believed to be inconsistencies in the data set. Without their help, this kind of insider knowledge is difficult to obtain. The same approach applies to other phases of the CRISP-DM methodology.
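A lightweight way to prepare for those Phase 2 conversations is to flag, rather than delete, records that look inconsistent, and then walk through the list with a domain expert. The sketch below assumes a hypothetical GPS export with one row per athlete session; the field names, the session labels, and the 15,000 m plausibility ceiling are illustrative assumptions rather than values from any particular system, and a sport scientist should set realistic thresholds for each sport.

```python
from dataclasses import dataclass


@dataclass
class GpsSession:
    # Hypothetical fields for one athlete session from a GPS export.
    athlete_id: str
    session_date: str      # ISO date, e.g. "2023-03-01"
    session_type: str      # illustrative labels such as "training" or "match"
    total_distance_m: float


def flag_for_review(sessions, max_distance_m=15000.0):
    """Return (session, reason) pairs that look inconsistent.

    Flagged rows are kept, not dropped: the aim is to bring them to a
    domain expert, who may know a legitimate explanation (e.g. a rehab
    session or a device swap). The default ceiling is an assumption.
    """
    flagged = []
    seen = set()
    for s in sessions:
        key = (s.athlete_id, s.session_date, s.session_type)
        if key in seen:
            flagged.append((s, "duplicate session"))
        seen.add(key)
        if s.total_distance_m <= 0:
            flagged.append((s, "zero or negative distance"))
        elif s.total_distance_m > max_distance_m:
            flagged.append((s, "distance above plausible maximum"))
    return flagged
```

The output is deliberately a review list rather than a cleaned data set: a zero-distance row might be a device failure, or it might be an athlete who was injured and did not train, and only the people working with the athletes can tell which.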

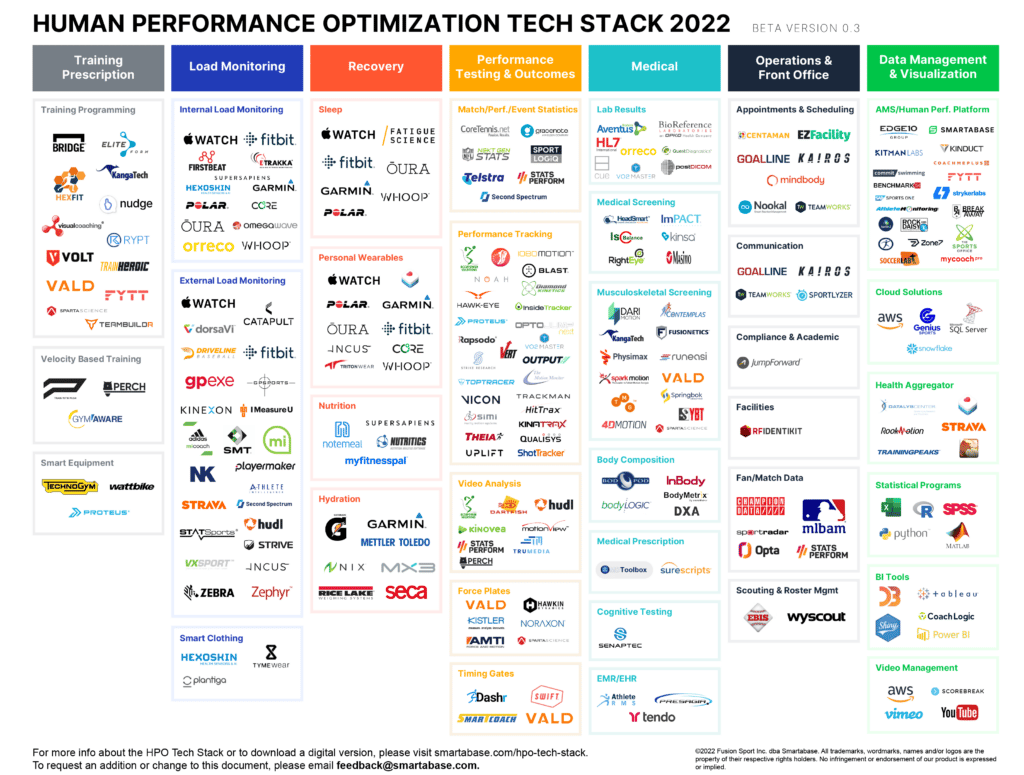

The Modern Analytics Stack

Data is the most valuable asset for data and analytics professionals, and over the last 10 years there has been an explosion in the amount of information available to them. While this creates a fantastic opportunity to generate value in this industry, it does not come without challenges. Data is often scattered across multiple sources belonging to proprietary technologies that may or may not allow it to be accessed for analysis outside that tool. As a result, data and analytics professionals often spend a considerable amount of time bringing data into a centralized repository that they can query for analysis and reporting.

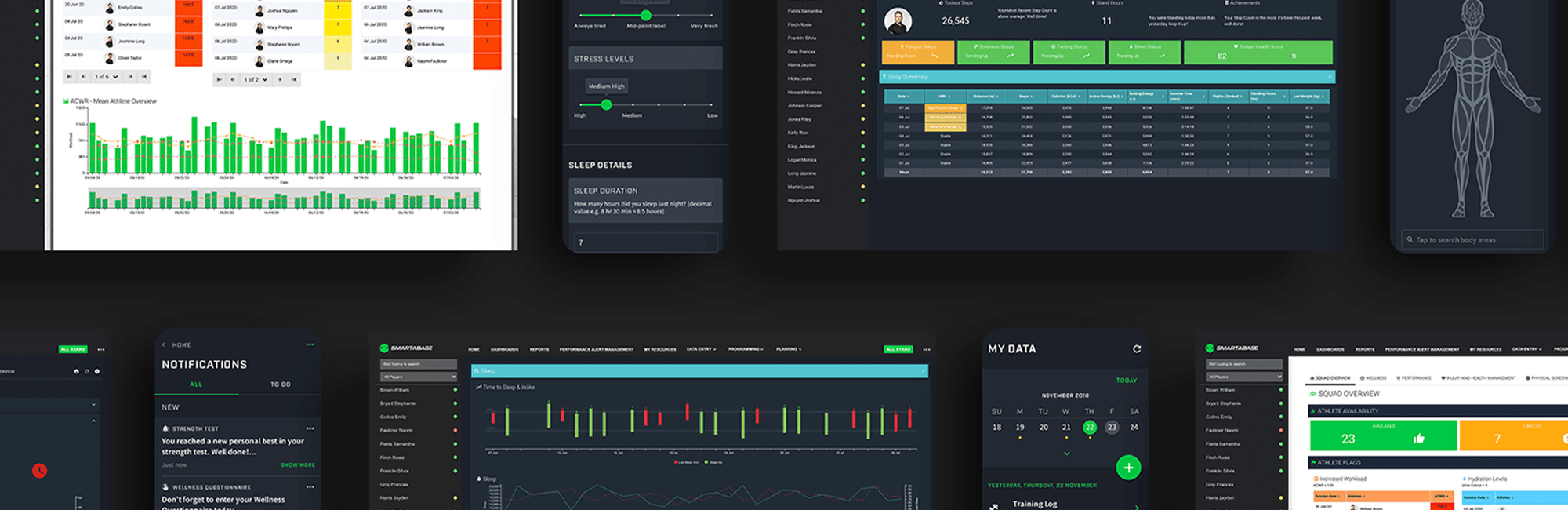

Fortunately, certain tools can help the data and analytics team get up to speed more quickly. For example, rather than spending time extracting data stored in many different silos (such as databases, spreadsheets, and vendors’ proprietary systems), the team can unite it in a single platform with an athlete management system (AMS) such as Smartabase. This can bring together GPS metrics from practice, game stats, performance testing, physical screening, and many other types of information, making it easier to grasp all the data the organization collects.

Furthermore, Smartabase offers out-of-the-box integrations such as the Analytics Connector, which continuously transfers and synchronizes all your Smartabase data to your own database (Microsoft SQL Server, MySQL, PostgreSQL, or Redshift). Once the data is there, you can query it with your preferred scripting language (R, Python, etc.) or BI tool (Power BI, Tableau, etc.). If you do not have access to an external database, or do not want to maintain one, you can still pull data directly from Smartabase into your R environment using the R Connector, an R library that lets you export data from and import data into Smartabase with a minimal amount of code.
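To illustrate what querying centralized data can look like, the sketch below uses Python’s built-in sqlite3 module as a stand-in for an external database such as PostgreSQL. The `athletes` and `gps_sessions` tables, their columns, and the sample rows are hypothetical; the schema produced by any real connector will differ, and with a PostgreSQL driver such as psycopg2 the query placeholder would be `%s` rather than `?`. The point is that once the data lives in one place, a single join answers a question that would otherwise mean exporting from two systems.

```python
import sqlite3

# Hypothetical schema standing in for tables synced from an AMS; real table
# and column names will depend on the connector and your configuration.
SCHEMA = """
CREATE TABLE athletes (athlete_id TEXT PRIMARY KEY, sport TEXT);
CREATE TABLE gps_sessions (
    athlete_id TEXT REFERENCES athletes(athlete_id),
    session_date TEXT,
    total_distance_m REAL
);
"""


def distance_by_athlete(conn, sport):
    """Session count, total and mean GPS distance per athlete in one sport."""
    query = """
        SELECT a.athlete_id,
               COUNT(*)                AS sessions,
               SUM(g.total_distance_m) AS total_m,
               AVG(g.total_distance_m) AS mean_m
        FROM gps_sessions AS g
        JOIN athletes AS a ON a.athlete_id = g.athlete_id
        WHERE a.sport = ?
        GROUP BY a.athlete_id
        ORDER BY a.athlete_id
    """
    return conn.execute(query, (sport,)).fetchall()


def demo_connection():
    """Build an in-memory database with a few made-up rows to try the query."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO athletes VALUES (?, ?)",
        [("A01", "field hockey"), ("A02", "field hockey"), ("A03", "tennis")],
    )
    conn.executemany(
        "INSERT INTO gps_sessions VALUES (?, ?, ?)",
        [("A01", "2023-03-01", 6000.0), ("A01", "2023-03-03", 7000.0),
         ("A02", "2023-03-01", 5500.0), ("A03", "2023-03-02", 3000.0)],
    )
    return conn


if __name__ == "__main__":
    for row in distance_by_athlete(demo_connection(), "field hockey"):
        print(row)
```

The same SQL, adjusted for dialect, could be pasted into a BI tool’s custom query editor, which is one reason a shared database is a convenient hand-off point between analysts using different tools.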

Tiered Hiring Strategy

When it comes to deciding whom to hire first for your data and analytics team, no single strategy guarantees success. Instead, it depends on several factors, such as: What kind of projects will your team work on? How easily available is the data in your organization? What is your hiring budget? What is your organization’s data maturity level?

Learning about the Data Science Pyramid of Needs framework, introduced by Monica Rogati in 2017, is key to understanding why you should prioritize hiring for some roles before others. This framework not only lists the stages of the data science process but also explains why it is not possible to advance further in the process without fulfilling all the previous stages. But what does this really mean?

Organizations turn to data scientists to build forward-looking predictive models to give them an edge against their competitors in areas such as talent identification, recruitment, etc. In the Data Science Pyramid of Needs, this type of work (testing, modeling, and research) sits at the top of the pyramid. What often gets overlooked is that the accuracy of a prediction model largely relies on the availability of abundant and reliable historical data, an area where data analysts play a key role; this is the middle part of the pyramid. Lastly, the job of the data analyst would not be possible if there were no systems and processes in place to capture, store, and make the data available for analysis in the first place, which is the responsibility of data engineers.

By now, you probably realize the importance of understanding this framework when deciding whom to hire first. Does this mean you need to hire a data engineer first? Not necessarily. If your organization already uses an AMS like Smartabase that brings a vast array of data sources into one system and lets you query this data using your preferred analytics IDE or BI tool, your data analyst or data scientist can dedicate most of their time to building reports or predictive models (and bringing value to your organization), rather than struggling to access the raw data.
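The dependency logic of the pyramid can be expressed as a toy decision rule: find the lowest layer that is not yet covered and hire for it first. This is a simplified, hypothetical reading of the framework with three layers mapped to the roles described above, not a hiring tool; the layer names and the role mapping are assumptions made for illustration.

```python
from enum import IntEnum


class Layer(IntEnum):
    # Ordered bottom to top; a simplified, hypothetical three-layer reading.
    COLLECT_AND_STORE = 1   # capture, store, and expose data
    ANALYZE_AND_REPORT = 2  # clean historical data, reporting
    MODEL_AND_PREDICT = 3   # testing, modeling, and research


# Illustrative mapping from each layer to the role most associated with it.
SUGGESTED_ROLE = {
    Layer.COLLECT_AND_STORE: "data engineer",
    Layer.ANALYZE_AND_REPORT: "data analyst",
    Layer.MODEL_AND_PREDICT: "data scientist",
}


def next_hire(layers_in_place):
    """Suggest a role for the lowest layer that is not yet covered.

    Iterating over Layer visits members in definition order (bottom to top),
    so the first gap found is the one every higher layer depends on.
    Returns None when every layer is already covered.
    """
    for layer in Layer:
        if layer not in layers_in_place:
            return SUGGESTED_ROLE[layer]
    return None
```

For example, an organization whose AMS already handles collection, storage, and query access would call `next_hire({Layer.COLLECT_AND_STORE})` and get `"data analyst"` back, which mirrors the reasoning in the paragraph above.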

Creating a Blueprint for Smaller HP Analytics Teams

Even in countries that are leading the charge in human performance, like Australia, the resources allocated to establishing new data and analytics teams are often limited. If this is the case in your organization, it would make sense to hire a ‘generalist’ data and analytics professional with working knowledge of infrastructure (AWS, Azure, etc.), ETL tools (Fivetran, Airbyte, etc.), programming (SQL, R, Python, etc.), and visualization tools (Power BI, Tableau, etc.). They will be able to lay the foundation to build on and provide some early return on investment that could trigger further growth of the team.

Remember to create the right environment for your team members to engage with HP domain experts and learn about their problems. If you have limited resources, focus on a small number of highly targeted projects that showcase the value your team can bring to the organization. This is a great blueprint for any HP analytics team to follow in its early days: combine technical expertise with domain knowledge, turn performance problems into data problems, and then master one or two specific areas. This will allow you to find easy wins that demonstrate the true value of data analytics to the rest of the organization and get buy-in from coaches and others who can become advocates for your HP program moving forward. Such victories do not have to involve fancy techniques or sophisticated models; they can be as simple as getting people the data they need, when they need it, in a format they can use.
